Software That (Almost) Runs Itself

Every generation of software distribution solved one problem and revealed the next. The pattern is consistent: we find a way to package more knowledge into the artefact, and in doing so, we change who needs to know what. Trace that arc from Makefiles to Kubernetes operators, and you see not just a history of technology but a history of trust — who holds the expertise, and where it lives.

By Jurg van Vliet

Source code and tribal knowledge

In the beginning, software shipped as source code. You downloaded a tarball, ran ./configure && make && make install, and hoped your system had the right libraries. The Makefile encoded how to build the software. Everything after that — where to put the config file, how to start the daemon on boot, what to do at 3 AM when the process died — lived in a single place: the sysadmin's head.

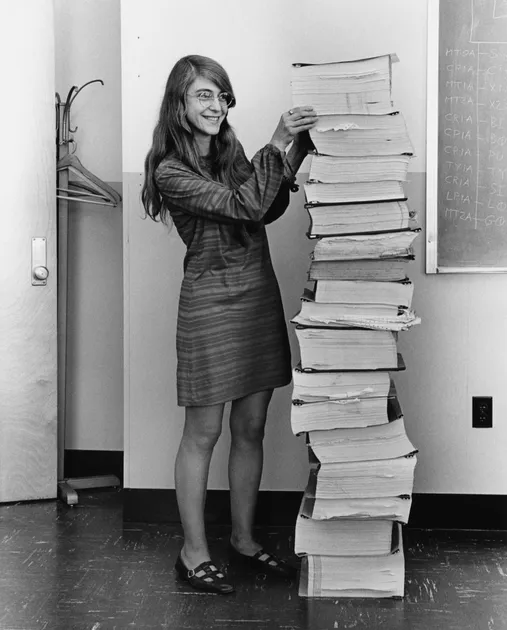

The sysadmin was the expert. She tuned kernel parameters, wrote init scripts, memorized the order in which services had to start. Her knowledge was deep, specific, and almost entirely undocumented. When she left, the knowledge left with her. Package managers — RPM in 1997, dpkg before that — improved distribution by solving dependency resolution and file placement. But they didn't touch the harder problem: how do I run this thing reliably? That stayed tribal. Post-mortems were written, wikis were updated, and nobody read either of them twice.

Portable but not operational

Then, in August 2006, Amazon launched EC2. The unit of deployment shifted from "package on a server" to "entire machine image." AMIs captured not just the application but its runtime environment. When Docker followed in March 2013, it sharpened the idea further: a container image was a self-contained, portable artefact. No more "works on my machine." The application carried its dependencies with it.

This was a genuine revolution in distribution. But portable is not the same as operational. A container image tells you nothing about how many replicas to run, how to route traffic between them, or what to do when one crashes. The complexity didn't disappear. It migrated — from "how do I install this" to "how do I orchestrate this at scale."

The human role shifted with it. In October 2009, Patrick Debois organized the first DevOpsDays conference in Ghent, giving a name to what many teams were already feeling: developers couldn't just throw code over the wall to operations anymore. The cloud had blurred the boundary. SaaS products solved the problem entirely for end users — someone else runs the infrastructure. But for the teams building those platforms, operational burden compounded with every microservice added to the architecture. The sysadmin became the DevOps engineer: someone who understood CI/CD pipelines, container orchestration, and monitoring, not just the OS.

Infrastructure as code, application as mystery

The next leap came in February 2011, when AWS released CloudFormation. For the first time, you could describe cloud infrastructure (servers, networks, load balancers) as a declarative template. Terraform, released by HashiCorp in July 2014, generalized the idea across cloud providers and became the de facto standard for multi-cloud provisioning. (OpenTofu, the open-source fork created after HashiCorp changed Terraform's license in 2023, now continues that work.) Infrastructure became code: reviewable, versioned, reproducible. The DevOps engineer became the cloud engineer, someone who thought in resource graphs and state files rather than SSH sessions and shell scripts.

But Infrastructure as Code has a blind spot, and it's a fundamental one. It describes what infrastructure should exist: this many instances, this network topology, this database cluster. It does not describe how the applications running on that infrastructure should behave. Terraform can provision a PostgreSQL cluster. It cannot promote a replica when the primary fails. It can create Kubernetes nodes. It cannot safely restart a stateful service that holds time-series data in memory.

Infrastructure as Code answers "what should exist." It is silent on "what should happen next."

The complexity cliff

That silence becomes deafening as software grows more complex. Consider a production deployment of Grafana Mimir, a horizontally scalable metrics backend. It runs as eight distinct components: distributors, ingesters, queriers, query-frontends, query-schedulers, compactors, store-gateways, and rulers. Each component scales independently. Each has different failure modes. The ingester holds recent metric data in memory, organized by tenant. Roll it carelessly during an upgrade — kill the pod before it flushes to long-term storage — and those samples vanish. The store-gateway needs time to load blocks from object storage before it can serve queries. Restart it too early and dashboards show gaps.

No Makefile builds this operational understanding. No container image encodes it. No Terraform module captures the safe order in which to restart eight interdependent components across three availability zones. The knowledge lives, as it always has, in human heads — and in runbooks that grow stale faster than anyone can maintain them.

This is the gap the Kubernetes operator pattern fills.

Ship the expertise with the software

In November 2016, engineers at CoreOS published a blog post titled "Introducing Operators: Putting Operational Knowledge into Software." The insight was direct: the best people to automate a database are the people who built the database. They know the failure modes. They know the recovery sequences. They know which upgrade paths are safe and which corrupt data. Instead of writing all that down and hoping someone follows the instructions, encode it in a program that runs alongside the application. Ship the expertise with the software.

An operator is a controller — a long-running process in the Kubernetes cluster that watches the desired state of a resource, compares it to reality, and reconciles the difference. This sounds like Terraform, but the distinction matters. Terraform runs when you invoke it, then stops. An operator runs continuously. It doesn't just create infrastructure; it reacts to drift, failure, and change in real time.
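To make the reconcile idea concrete, here is a minimal sketch in Python that involves no Kubernetes client at all. The names `Cluster`, `reconcile`, and `run` are hypothetical, and the in-memory state is an assumption standing in for what a real controller would read from and write to the Kubernetes API.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Stand-in for real cluster state; hypothetical, for illustration only."""
    desired_replicas: int
    running: list[str] = field(default_factory=list)
    next_id: int = 0


def reconcile(cluster: Cluster) -> str:
    """Take one step toward the desired state and report what was done."""
    actual = len(cluster.running)
    if actual < cluster.desired_replicas:
        name = f"pod-{cluster.next_id}"
        cluster.next_id += 1
        cluster.running.append(name)
        return f"started {name}"
    if actual > cluster.desired_replicas:
        return f"stopped {cluster.running.pop()}"
    return "in sync"


def run(cluster: Cluster, max_steps: int = 20) -> list[str]:
    """Reconcile until in sync. A real controller never exits; it keeps
    watching, so max_steps only exists to keep this sketch finite."""
    log = []
    for _ in range(max_steps):
        action = reconcile(cluster)
        log.append(action)
        if action == "in sync":
            break
    return log


if __name__ == "__main__":
    cluster = Cluster(desired_replicas=3)
    print(run(cluster))
    cluster.running.remove("pod-1")  # a pod crashes: drift from the desired state
    print(run(cluster))              # the next pass notices and repairs it
```

The point of the sketch is that nobody asks the loop to repair the crashed pod. It compares desired and actual state on every pass, which is the difference from a tool that only acts when invoked.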

Grafana Labs ships a concrete example of this idea with their Mimir Helm chart: the rollout-operator. Its scope is narrow — it manages the safe rollout of stateful components during upgrades in zone-aware deployments — but within that scope, it replaces an entire runbook.

When Mimir runs with zone-aware replication across three availability zones, an upgrade must proceed one zone at a time. Update zone A, wait for those ingesters to become ready and catch up on replication, then move to zone B, then zone C. At no point should two zones degrade simultaneously. The rollout-operator coordinates this sequence automatically. It defines two custom resource types — ZoneAwarePodDisruptionBudgets and ReplicaTemplates — that extend the Kubernetes API with Mimir-specific operational concepts. The budget ensures zone-safe disruption constraints. The template maintains consistent replica counts per zone during the transition.
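The sequencing rule can be sketched in a few lines of Python. This is not the rollout-operator's actual implementation. The function `rollout_by_zone` and its two callbacks are hypothetical stand-ins for real cluster operations, and the sketch assumes health can be checked with a simple yes/no call.

```python
from typing import Callable


def rollout_by_zone(
    zones: list[str],
    update_zone: Callable[[str], None],
    zone_is_healthy: Callable[[str], bool],
    max_checks: int = 10,
) -> list[str]:
    """Update zones strictly one at a time.

    Returns the zones that were updated and confirmed healthy. Stops at the
    first zone that does not recover, so at most one zone is ever degraded.
    """
    done: list[str] = []
    for zone in zones:
        # Never start a zone while a previously updated one is unhealthy.
        if not all(zone_is_healthy(z) for z in done):
            break
        update_zone(zone)
        # A real controller would wait between checks; omitted to keep the sketch fast.
        if not any(zone_is_healthy(zone) for _ in range(max_checks)):
            break  # leave the remaining zones untouched
        done.append(zone)
    return done


if __name__ == "__main__":
    # Number of failed health checks each zone reports before becoming ready.
    pending = {"zone-a": 2, "zone-b": 0, "zone-c": 1}

    def update(zone: str) -> None:
        print(f"updating {zone}")

    def healthy(zone: str) -> bool:
        if pending[zone] > 0:
            pending[zone] -= 1
            return False
        return True

    print(rollout_by_zone(["zone-a", "zone-b", "zone-c"], update, healthy))
```

If a zone never becomes healthy, the function stops and the later zones are never touched. That is the property a tired human is most likely to violate by moving on too soon.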

A human operator could do all of this by hand. Read the docs. Run kubectl commands in the right order. Watch logs. Wait. Proceed. But the human does it once per upgrade, under pressure, possibly at 2 AM, and sooner or later gets a step wrong. The rollout-operator does it every time, identically, because it is the procedure.

Knowledge that compounds

The pattern has spread far beyond monitoring backends. CloudNativePG encodes PostgreSQL DBA knowledge: when the primary fails, it promotes a replica, reconfigures the remaining replicas to follow the new primary, and updates service endpoints. That is the same decision tree a senior DBA would follow, executed in seconds rather than the minutes it takes a human to context-switch and assess the situation. The Istio operator manages service mesh configuration. Strimzi manages Kafka clusters. The CNCF Operator Framework and Kubebuilder have made it straightforward to build new ones, and Helm charts increasingly bundle operators as dependencies.
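As an illustration of what "encoding a decision tree" means, here is a small Python sketch of a promotion decision. It is not CloudNativePG's logic. The `Replica` type, the integer `replayed_lsn` field, and both functions are hypothetical, and the rule "promote the healthy replica that has replayed the most write-ahead log" is an assumption chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Replica:
    name: str
    healthy: bool
    replayed_lsn: int  # how far this replica has replayed the write-ahead log


def pick_promotion_candidate(replicas: list[Replica]) -> Optional[Replica]:
    """Return the healthy replica that has replayed the most WAL, or None."""
    candidates = [r for r in replicas if r.healthy]
    if not candidates:
        return None  # nothing safe to promote: a human has to decide
    return max(candidates, key=lambda r: r.replayed_lsn)


def fail_over(replicas: list[Replica]) -> dict[str, object]:
    """Plan a failover: who becomes primary, and who must follow it."""
    new_primary = pick_promotion_candidate(replicas)
    if new_primary is None:
        return {"promote": None, "follow_new_primary": [], "write_endpoint": None}
    followers = [r.name for r in replicas if r.healthy and r != new_primary]
    return {
        "promote": new_primary.name,
        "follow_new_primary": followers,
        "write_endpoint": new_primary.name,
    }


if __name__ == "__main__":
    replicas = [
        Replica("pg-2", healthy=True, replayed_lsn=900),
        Replica("pg-3", healthy=True, replayed_lsn=1000),
        Replica("pg-4", healthy=False, replayed_lsn=1200),
    ]
    print(fail_over(replicas))
```

The unhealthy replica is skipped even though it has replayed the most log, and the empty case returns no candidate instead of guessing. Rules like these are the kind of judgment that otherwise lives in a runbook.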

This changes what gets distributed. Software is no longer just an image and documentation. It's an image, an operator, and a set of custom resource definitions that extend Kubernetes with domain-specific vocabulary. When you install Mimir, you don't just get containers. You get new API objects that represent Mimir's operational model. The platform becomes aware of concepts — zones, replicas, rollout safety — that it previously had no language for.

The compounding effect is the most significant consequence. When the CloudNativePG team encounters a new failure mode in production, the fix becomes a patch to the operator's logic. It ships to every deployment that upgrades, not just the cluster that burned. Operational knowledge accumulates in code, not in runbooks that age into fiction.

The platform engineer

The human role has shifted once more. The platform engineer — the title gaining traction now — doesn't manually configure Mimir or write custom rolling-update scripts. She selects operators, configures their custom resources, and trusts them to encode the expertise she once carried herself. Her job is to compose the right operators, define the right constraints, and intervene when automation reaches its limits.

Each era eliminated a category of manual work and demanded a different kind of expertise. The sysadmin knew machines. The DevOps engineer knew pipelines. The cloud engineer knew resource graphs. The platform engineer knows operators. The expertise didn't diminish — it shifted to a higher level of abstraction.

The cost of delegation

Operators are not free, and it would be dishonest to pretend otherwise.

They add moving parts. An operator bug can cause more damage than a manual mistake, precisely because it acts faster and at broader scale. An operator that loses its watch connection to the Kubernetes API may misread the current state and make destructive decisions with full confidence.
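One common defence against acting on an outdated view is to refuse disruptive actions when the last observation is too old. The Python sketch below shows the idea. The `Observation` type, the `safe_to_disrupt` function, and the 30-second threshold are all hypothetical, and real operators may guard against staleness in other ways.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    ready_pods: int
    observed_at: float  # seconds, from a monotonic clock


def safe_to_disrupt(
    obs: Observation,
    now: float,
    min_ready: int,
    max_age_seconds: float = 30.0,
) -> bool:
    """Allow removing one pod only on fresh data that leaves enough ready pods."""
    if now - obs.observed_at > max_age_seconds:
        return False  # the view may be stale: do nothing rather than guess
    return obs.ready_pods - 1 >= min_ready


if __name__ == "__main__":
    obs = Observation(ready_pods=3, observed_at=100.0)
    print(safe_to_disrupt(obs, now=110.0, min_ready=2))  # fresh, enough headroom
    print(safe_to_disrupt(obs, now=200.0, min_ready=2))  # same data, now too old
```

The design choice is to fail closed: when the operator cannot trust what it sees, the safest action is usually no action.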

The model concentrates trust. When the rollout-operator decides the order in which to restart your ingesters, you trust that Grafana Labs tested that sequence against your topology. They usually did. But sometimes your deployment is the edge case nobody anticipated.

And the complexity doesn't vanish — it transforms. You no longer need to know how to manually coordinate a zone-by-zone rollout. But you need to understand custom resource definitions, admission webhooks, RBAC permissions, and the failure modes of the operator itself. The old complexity was chaotic and human-dependent. The new complexity is structured and machine-dependent. Both can fail you.

Where this goes

Still, the trajectory is clear. Software distribution converges on a model where the artefact and its operational knowledge ship as one unit. The DBA's expertise lives in the PostgreSQL operator. The SRE's upgrade checklist lives in the rollout-operator. The network engineer's traffic-shifting logic lives in the service mesh controller.

This doesn't eliminate human judgment. Someone must still decide what to run, why to run it, and when the automated decisions are wrong. But the gap between "I want to run this" and "this runs correctly in production" narrows with every operator that ships.

The deepest shift may be cultural. When software knows how to run itself, the vendor's responsibility extends beyond the artefact. Shipping a container image without an operator starts to feel like shipping a car without a transmission — technically complete, practically useless for most drivers. The best infrastructure teams already think this way. The rest will follow, because the alternative — shipping ever-growing complexity without encoding the expertise to manage it — stopped scaling years ago.